Tag: machine learning

-

An “Alien” of Extraordinary Ability

A candid story about EB1A – legal immigration to the USA.

-

GitHub Conversations: Characterizing Framing & Measuring Openness

Measuring openness in GitHub conversations

-

Select Additive Learning: Representation Learning for Small Datasets & Identity Bias Mitigation

-

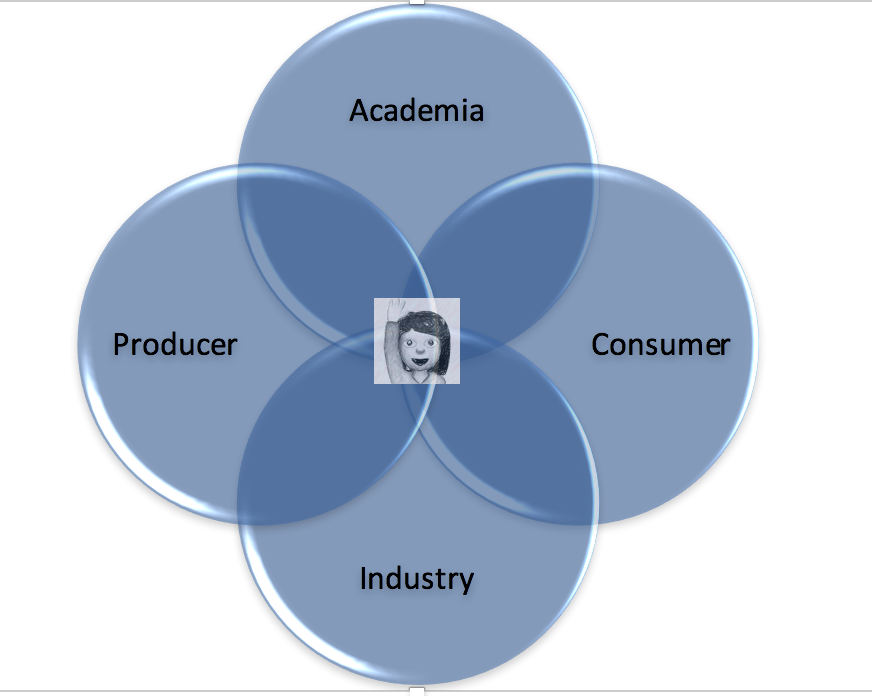

3 years of Swimming. In Data.

As 2016 is *finally* coming to an end, a year whose repercussions will be felt for many more to come, I cannot help but reflect on the past few years that I have spent swimming. Swimming in data, that is. I have had the opportunity to be a productive participant in the field of data…